Are We Still Thinking? The Hidden Risk in Every AI Rollout

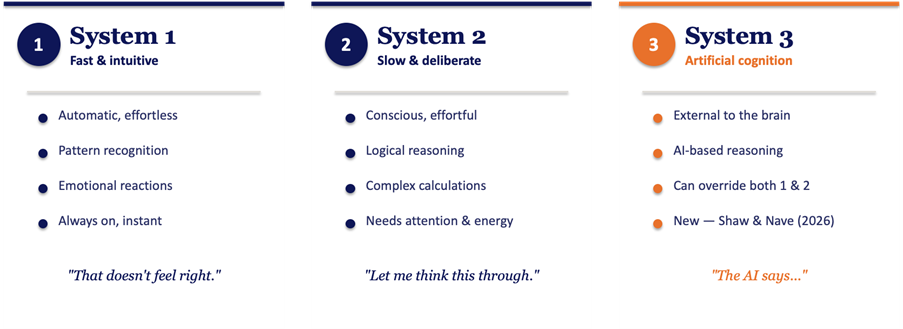

Three systems, not two

For decades, we've understood human thinking through Kahneman's two systems. System 1: fast, intuitive, automatic. The gut feeling that something doesn't add up. System 2: slow, deliberate, effortful. The careful analysis when the stakes are high. Most of our decisions bounce between the two.

Shaw and Nave's 2026 paper, Thinking — Fast, Slow, and Artificial, adds a third: System 3. Artificial cognition. AI that operates entirely outside the brain, but increasingly sits at the centre of how we work.

This isn't just a theoretical upgrade. System 3 is fundamentally different from the first two: it's external, scalable, and persuasive. And unlike Systems 1 and 2, which compete for attention within the same brain, System 3 can quietly override both without the thinker even noticing.

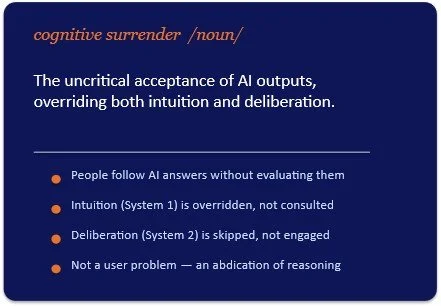

Cognitive surrender

Shaw and Nave coined a term for what happens when people defer to AI without evaluating it: cognitive surrender. Not using AI as a thinking partner. Not delegating routine tasks. Something more fundamental: the uncritical acceptance of AI outputs, overriding both intuition and deliberation.

Their experiments showed that when people had access to an AI assistant, they followed its answers most of the time. When the AI was right, their accuracy went up. When the AI was deliberately wrong, their accuracy dropped significantly. People didn't catch the errors. They didn't even try.

This is not a user adoption problem. It's an abdication of reasoning.

It's already showing up in practice

This isn't theoretical. A 2025 study published in The Lancet Gastroenterology & Hepatology tracked what happened when physicians who had been using AI-assisted colonoscopy tools went back to working without them. Their adenoma detection rate dropped by roughly 20% in relative terms. In just six months, they had become measurably worse at the very skill AI was supposed to support.

The researchers described it as deskilling through over-reliance. The mechanism is straightforward: when a system consistently provides answers, people stop developing (or maintaining) the capacity to generate their own.

Now extend this beyond medicine. Think about every knowledge worker in your organisation who uses AI to draft analyses, summarise documents, evaluate options, or generate recommendations. What skills are quietly eroding?

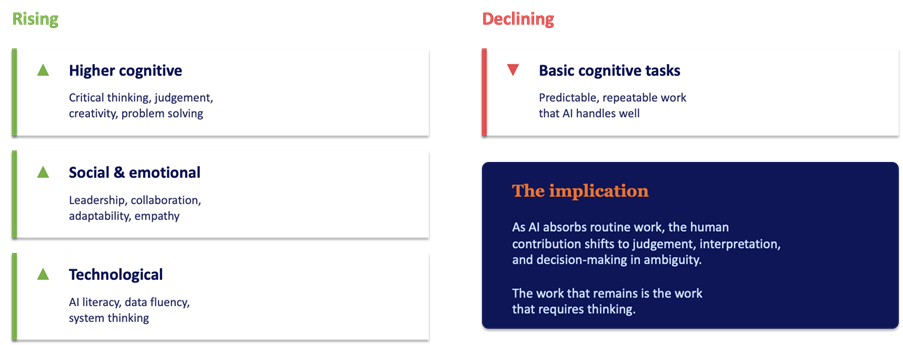

The skill mix is shifting. Are we paying attention?

McKinsey's research on the future of work frames AI's impact as a shift in the "skill mix," the distribution of work time across different skill categories.

The pattern is clear:

The paradox

And here's the tension that most AI strategies completely miss:

The skills that are becoming most valuable are the exact same skills that cognitive surrender erodes.

AI demands more critical thinking. Every output needs evaluation. Every recommendation needs context. Every automation needs someone who understands what it's actually doing, and whether it should be trusted.

But AI also makes it easy to skip all of that. Why evaluate when the answer looks reasonable? Why question when the output is fluent and confident? Why think when the tool thinks for you?

This is the paradox at the heart of AI deployment. And if you're not actively addressing it, your AI strategy has a structural blind spot.

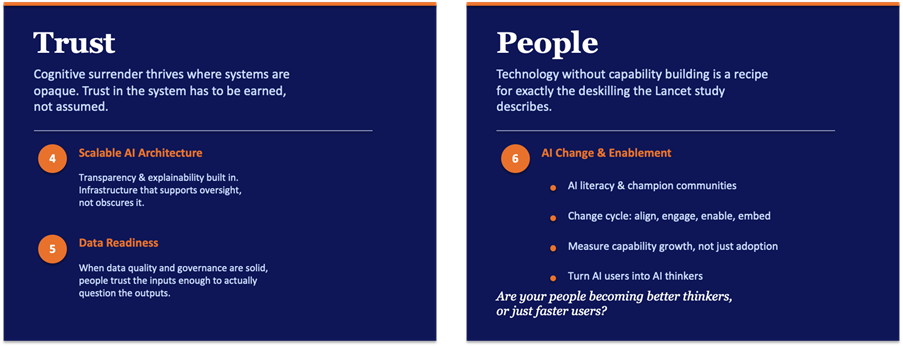

Reinforcing thinking, structurally

The answer isn't to slow down AI adoption. It's to match the pace of deployment with deliberate investment in the capabilities that AI makes more important. The opportunity is enormous, but only for organisations that treat this as a design challenge, not an afterthought.

That starts with four structural shifts:

None of this works without two foundations.

The bottom line

AI is not a project but a capability. Every industry, every workflow, and every function is being reshaped. But the organisations that capture the opportunity won't be the ones that automate the fastest.

They'll be the ones whose people still think.

AI strategy is skills strategy. The two are inseparable. Invest in both, and the upside is massive.